Kubernetes Host Networking

Getting started with Akita is as simple as dropping our Agent into your stack. Below are instructions for installing an Akita Agent using Kubernetes host networking. This will allow Akita to monitor any of the pods running on a node in your environment.

To configure a DaemonSet that runs the Akita Agent attached to the host network, you will:

- Meet the prerequisites

- Create an Akita Project

- Generate an API key for the Akita Agent

- Install the Akita Agent

- Add your Akita credentials as a Kubernetes secret

- Create a DaemonSet definition to run the Akita Agent using host networking

- Apply the DaemonSet configuration and generate an API map

- Verify that the Akita Agent is working

Prerequisites

Akita Account Required

You must have an Akita account to use Akita. You can create an account here.

In order to use this method, you must have:

- Unencrypted traffic to the API endpoint you want to monitor

- Host networking enabled in Kubernetes

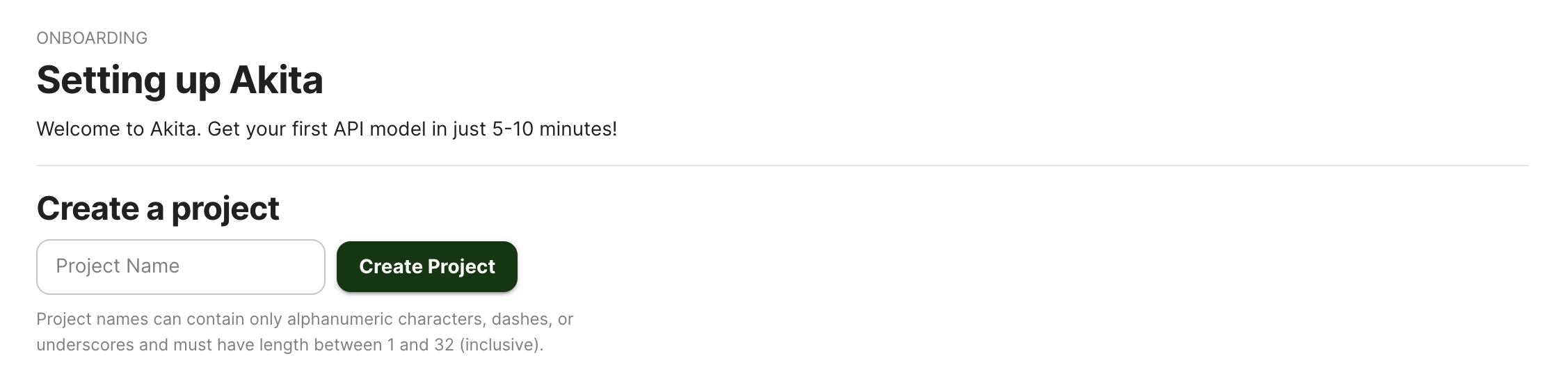

Create a project

Log in to the Akita App and go to the Settings page.

Enter a project name and click "Create Project". We suggest naming the project after your app or deployment stack.

Give your project a name that's easy to remember; you'll need it later, when you configure the Akita Agent.

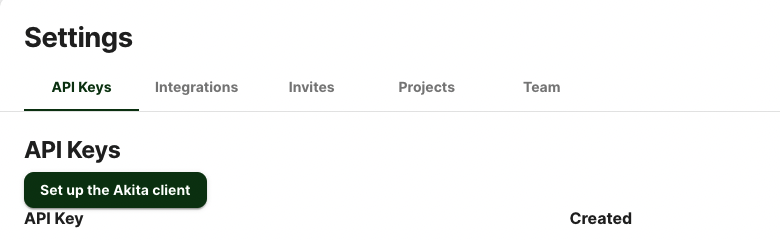

Generate API key

On the same Settings page, click the “API Keys” tab, then click the “Set up the Akita client” button. Copy your API key secret into your password manager or somewhere else you can easily access it. Also note your API key ID, as you will need both later.

Install the Agent

To install the Akita Agent, run the following:

bash -c "$(curl -L https://releases.akita.software/scripts/install_akita.sh)"

Then log in using akita login.

Add Secret

Next, add the Akita API key ID and secret you created in the previous step to your cluster as a Kubernetes Secret, which stores the values base64-encoded. To create or update your Akita Secret in Kubernetes, run the following command:

akita kube secret | kubectl apply -f -

If you run the deployment in a namespace, the secret must be in the same namespace as your deployment. The namespace for the secret can be defined using the --namespace flag:

akita kube secret --namespace <namespace> | kubectl apply -f -
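
For reference, an equivalent Secret can also be written by hand. The following is a minimal sketch, not the actual output of `akita kube secret`: it assumes the Secret is named `akita-secrets` with the keys `api-key-id` and `api-key-secret` (the names the DaemonSet definition reads), and the helper names and example values are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: builds a Secret manifest by hand. The real output of
# `akita kube secret` may differ. The Secret name and keys are taken from the
# DaemonSet's secretKeyRef entries; b64 and akita_secret_manifest are
# hypothetical helpers, not part of the Akita CLI.

# Base64-encode a value on a single line (Secret `data` values must be base64).
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Usage: akita_secret_manifest <key id> <key secret> [namespace]
# Prints a Secret manifest to stdout.
akita_secret_manifest() {
  local key_id="$1" key_secret="$2" namespace="${3:-default}"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: akita-secrets
  namespace: ${namespace}
type: Opaque
data:
  api-key-id: $(b64 "$key_id")
  api-key-secret: $(b64 "$key_secret")
EOF
}

# Example (placeholder values, not real credentials):
#   akita_secret_manifest "example-key-id" "example-secret" my-namespace | kubectl apply -f -
```

Whichever way the Secret is created, its namespace has to match the namespace the Agent pods run in, or the `secretKeyRef` lookups will fail.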

Create Daemonset

Create a new DaemonSet by adding the following to a new daemonset.yaml file or to an existing manifest file. A DaemonSet ensures that each eligible node runs an instance of the Agent pod.

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: akita-capture
spec:
  selector:
    matchLabels:
      name: akita-capture
  template:
    metadata:
      labels:
        name: akita-capture
    spec:
      containers:
      - image: akitasoftware/cli:latest
        imagePullPolicy: Always
        name: akita
        args:
        - apidump
        - --project
        - <your project name here>
        - --rate-limit
        - "200"
        env:
        - name: AKITA_API_KEY_ID
          valueFrom:
            secretKeyRef:
              name: akita-secrets
              key: api-key-id
        - name: AKITA_API_KEY_SECRET
          valueFrom:
            secretKeyRef:
              name: akita-secrets
              key: api-key-secret
        securityContext:
          capabilities:
            add: ["NET_RAW"]
      dnsPolicy: ClusterFirst
      hostNetwork: true
      restartPolicy: Always
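
The `<your project name here>` placeholder has to be replaced with the name of the project you created earlier before the file is applied. One way to do that from the shell is sketched below. The `render_daemonset` helper and the `my-project` name are hypothetical, and the sketch assumes the project name contains no `|`, `&`, or backslash characters, which `sed` would treat specially.

```shell
#!/usr/bin/env bash
# Sketch only: substitute the project-name placeholder in a manifest file.
# render_daemonset is a hypothetical helper, not part of the Akita CLI.

# Usage: render_daemonset <manifest file> <project name>
# Writes the manifest to stdout with the placeholder replaced; the input
# file itself is left unchanged.
render_daemonset() {
  local manifest="$1" project="$2"
  sed "s|<your project name here>|${project}|" "$manifest"
}

# Example: render the manifest and apply it in one step.
#   render_daemonset daemonset.yaml my-project | kubectl apply -f -
```

Keeping the placeholder in the file and rendering at apply time lets the same manifest be reused for several projects; editing the file once by hand works just as well.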

Apply Daemonset

Now, apply the configuration you created with kubectl apply -f <yaml file>. You should see pods named akita-capture-xxxxx scheduled on all of the eligible nodes.
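
To confirm that the pods were scheduled, the rollout and the pod list can be checked with standard `kubectl` commands. This is a minimal sketch, assuming the `akita-capture` DaemonSet name and `name=akita-capture` label from the definition above; `check_akita_rollout` is a hypothetical helper, and the namespace defaults to `default`.

```shell
#!/usr/bin/env bash
# Sketch only: check_akita_rollout is a hypothetical helper that wraps two
# standard kubectl commands.

# Usage: check_akita_rollout [namespace]
check_akita_rollout() {
  local namespace="${1:-default}"
  # Wait until the DaemonSet reports its pods as rolled out.
  kubectl rollout status daemonset/akita-capture --namespace "$namespace" || return 1
  # List the Agent pods together with the node each one is running on.
  kubectl get pods --namespace "$namespace" --selector name=akita-capture --output wide
}

# Example:
#   check_akita_rollout my-namespace
```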

Verify

You can use kubectl logs to verify that the Akita Agent is collecting data.
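
For example, recent log lines from the Agent pods can be pulled by label rather than by individual pod name. This is a sketch, assuming the `name=akita-capture` label from the DaemonSet definition; `akita_agent_logs` is a hypothetical helper, and the exact content of the Agent's log lines is not covered here.

```shell
#!/usr/bin/env bash
# Sketch only: akita_agent_logs is a hypothetical helper around `kubectl logs`.

# Usage: akita_agent_logs [namespace] [lines]
akita_agent_logs() {
  local namespace="${1:-default}" lines="${2:-20}"
  # --selector picks the Agent pods by label; --prefix marks each line with
  # the pod it came from; --tail limits the output per pod.
  kubectl logs --namespace "$namespace" --selector name=akita-capture \
    --tail="$lines" --prefix
}

# Example: last 50 lines from the Agent pods in my-namespace.
#   akita_agent_logs my-namespace 50
```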

In the Akita web console, check out the incoming data on the Model page. You should see a map of your API being generated as the Akita Agent gathers data.

Then check out the Metrics and Errors page to get real-time information on the health of your app or service.
